Hey hey —

Two years ago, “evals” weren’t in your vocabulary.

Now they’re everywhere inside AI teams.

And you keep hearing the same line from leaders at companies like OpenAI and Anthropic:

“If you want to build great AI products, you need to get really good at evals.”

At first, that sounds… boring.

Testing. Scoring. Metrics.

Homework.

But here’s the truth:

Evals are one of the most practical skills in AI product work.

They’re how you turn an AI demo into something people can trust.

Let’s break it down from zero.

What are evals, in simple words?

Normal software behaves the same way every time.

Click “Save” → it saves.

AI doesn’t work like that.

The same question can get a different answer.

Small changes (prompt, model, tools, data) can create new weird failures.

So the real question becomes:

How do we know the AI is doing a good job — today, and after we change things?

That’s what evals are for.

Simple definition:

An eval is a repeatable check of AI behaviour.

You test the AI on the same real examples, using the same simple rules, every time you ship a change.

So instead of:

“Feels okay.”

You get:

“Out of 50 real examples, we pass 41 today. After the change, we pass 46.”

That’s the whole point.
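
The arithmetic behind that sentence is tiny. Here is a minimal Python sketch, assuming each example has already been marked pass (True) or fail (False). The two result lists are made up to match the 41 and 46 above; they are not real data.

```python
def pass_rate(results):
    """Return (passed, total) for a list of True/False eval results."""
    return sum(results), len(results)


# Hypothetical results for the same 50 examples, before and after a change.
before = [True] * 41 + [False] * 9
after = [True] * 46 + [False] * 4

for label, results in (("before", before), ("after", after)):
    passed, total = pass_rate(results)
    print(f"{label}: {passed}/{total} passed ({passed / total:.0%})")
```

The key detail is that both runs use the same 50 examples. If the examples change between runs, the two numbers are not comparable.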

Why you can’t rely on “testing a few prompts”

Most teams start with vibe checks:

try 10 prompts

skim a few chats

ship

This works… until real users show up.

Then you see:

a tiny prompt change breaks a key behaviour

the team stops trusting the system

engineers get scared to touch anything

quality drops quietly and you notice late

Evals replace vibes with evidence.

They let a team agree on what “good” means and track it over time.

What does an eval look like?

An eval can be as simple as a spreadsheet.

It has four parts:

Scenario — the user situation you care about

Rule — what counts as pass/fail

Examples — real inputs from logs/tickets/chats

Cadence — when you run it (before shipping + in production)

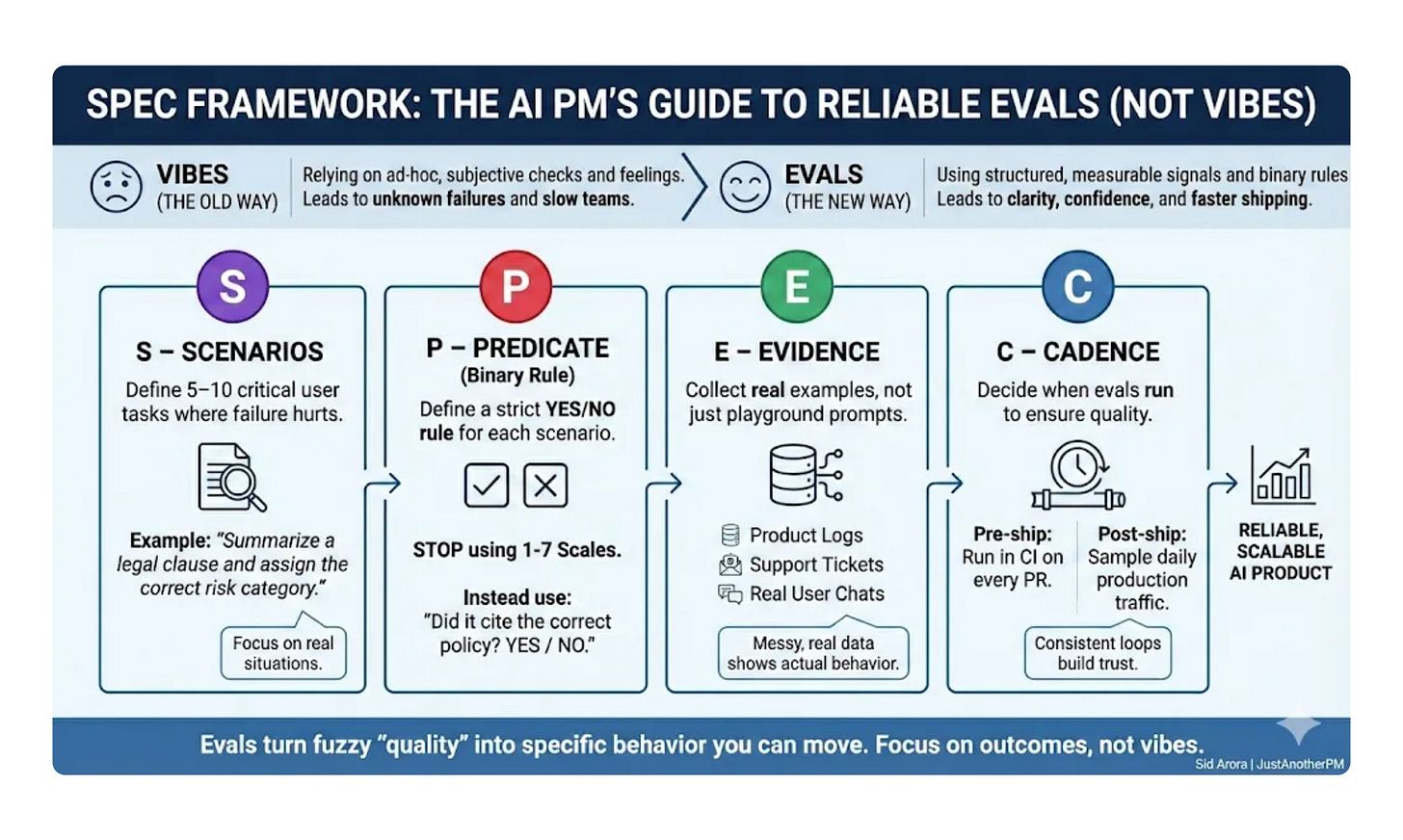

I use a tiny mental model called SPEC:

S — Scenarios: pick the situations where failure hurts

P — Predicate (rule): write a strict TRUE/FALSE rule

E — Evidence: use real examples

C — Cadence: run it often, not once

A quick note: the rule has to be strict.

Bad rule: “Was the answer helpful?” (everyone argues)

Good rule: “If the user mentions a billing dispute, the bot must hand off to a human.” (TRUE/FALSE)
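
One way to see why the good rule works: it fits in a single TRUE/FALSE function. This is a sketch, not a real implementation. The `Example` fields are names I made up, and it assumes a human has already marked which messages are billing disputes and that the log shows whether the bot handed off.

```python
from dataclasses import dataclass


@dataclass
class Example:
    user_message: str
    is_billing_dispute: bool  # assumption: labelled by a human reviewer
    bot_handed_off: bool      # assumption: read from the conversation log


def billing_handoff_rule(ex: Example) -> bool:
    """TRUE unless a billing dispute was NOT handed off to a human."""
    return (not ex.is_billing_dispute) or ex.bot_handed_off


# Hypothetical examples, for illustration only.
examples = [
    Example("I was charged twice", True, True),
    Example("I was charged twice", True, False),
    Example("How do I reset my password?", False, False),
]

for ex in examples:
    verdict = "PASS" if billing_handoff_rule(ex) else "FAIL"
    print(f"{verdict}: {ex.user_message}")
```

Try writing “Was the answer helpful?” as a function like this and you will see the problem: there is nothing concrete to check.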

Example: HelpBot for customer support

Say you’re building HelpBot for a SaaS support team.

HelpBot:

reads help docs

answers customer questions

must not make things up

must hand off to a human in certain cases

One high-risk case: billing disputes.

If someone says “I was charged twice”, the bot should hand off.

Here’s a tiny eval set (this can live in Google Sheets):

Eval: Billing dispute → must hand off

Now you can say something real:

“Billing handoff fails in 3 out of 5. That’s too high. Fix next.”

This is why evals matter.

They turn a fuzzy feeling into a clear problem you can fix.

Who decides pass/fail?

At first: a human.

You (or someone with domain knowledge) label 10–20 examples as PASS/FAIL.

This forces clarity.

Later, teams often use an LLM judge.

That just means: another model grades the answer using your rule.

Simple judge instruction:

here is the conversation

here is the rule

output only TRUE or FALSE

The judge doesn’t need to be perfect.

It needs to be consistent, and to agree with your hand labels on most examples, so you can compare changes.
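
Here is a rough sketch of that judge in Python. It does not call any real model API: `call_model` is a placeholder for whatever function sends a prompt to your model and returns its text, and `fake_model` is a stand-in so the example runs. The prompt wording and the `agreement` helper are my own assumptions.

```python
from typing import Callable, Sequence

JUDGE_TEMPLATE = """Here is the conversation:
{conversation}

Here is the rule:
{rule}

Output only TRUE or FALSE."""


def build_judge_prompt(conversation: str, rule: str) -> str:
    """Fill the three-part judge instruction with one conversation and one rule."""
    return JUDGE_TEMPLATE.format(conversation=conversation, rule=rule)


def parse_verdict(raw: str) -> bool:
    """Accept only TRUE or FALSE; anything else is an error, not a guess."""
    verdict = raw.strip().upper()
    if verdict == "TRUE":
        return True
    if verdict == "FALSE":
        return False
    raise ValueError(f"Judge returned something other than TRUE/FALSE: {raw!r}")


def judge(conversation: str, rule: str, call_model: Callable[[str], str]) -> bool:
    """Grade one conversation with whatever model function you pass in."""
    return parse_verdict(call_model(build_judge_prompt(conversation, rule)))


def agreement(judge_labels: Sequence[bool], human_labels: Sequence[bool]) -> float:
    """Share of examples where the judge matches the human label."""
    if not human_labels or len(judge_labels) != len(human_labels):
        raise ValueError("Need two non-empty label lists of the same length")
    matches = sum(j == h for j, h in zip(judge_labels, human_labels))
    return matches / len(human_labels)


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call. Always answers TRUE."""
    return " true\n"


rule = "If the user mentions a billing dispute, the bot must hand off to a human."
print(judge("User: I was charged twice\nBot: Let me connect you to a human.", rule, fake_model))
```

Before trusting the judge, run it on the examples you labelled by hand and look at `agreement`. If it disagrees with you often, tighten the rule first.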

Two types of evals (don’t mix them up)

1) Offline evals (your test set):

Like unit tests.

Same examples, run every time you change prompts/models/tools.

2) Production monitoring (real life):

Like a smoke alarm.

Sample real user chats daily and score them.

Start with offline.

Add production sampling once the product is live.
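
Production sampling can start as a few lines of Python. This sketch assumes you can load a day of chats into a list and that you already have a TRUE/FALSE rule function. The names `sample_chats` and `daily_pass_rate`, the sample size of 20, and the `handed_off` field are all illustrative.

```python
import random


def sample_chats(chats, k, seed=None):
    """Pick up to k chats at random. Pass a seed to make the sample repeatable."""
    rng = random.Random(seed)
    return rng.sample(chats, min(k, len(chats)))


def daily_pass_rate(chats, rule, k=20, seed=None):
    """Score a random sample with a TRUE/FALSE rule. Returns None if there were no chats."""
    sample = sample_chats(chats, k, seed)
    if not sample:
        return None
    return sum(1 for chat in sample if rule(chat)) / len(sample)


# Hypothetical chat records, for illustration only.
todays_chats = [{"id": i, "handed_off": i % 4 != 0} for i in range(100)]

rate = daily_pass_rate(todays_chats, lambda chat: chat["handed_off"], k=20, seed=7)
print(f"Today's sampled pass rate: {rate:.0%}")
```

The offline test set tells you whether a change is safe to ship. The daily sample tells you whether real traffic still looks like your test set.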

Copy-paste template (use this today)

Copy this into a sheet or Notion table:

Eval name:

Scenario (S):

Rule / TRUE-FALSE (P):

Where examples come from (E):

Test set size (start 30–50):

Owner:

Current pass rate:

Target pass rate:

What we do if it fails: (block ship / fix this week / alert)

Cadence (C): (pre-ship / daily / weekly)
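
If you would rather keep the template next to your code, the same fields fit in a small Python dataclass that exports to CSV. This is only a sketch: the field names are my own mapping of the template, and every value in the example row (owner, target, source) is a placeholder.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class EvalSpec:
    name: str
    scenario: str                       # S
    rule: str                           # P: strict TRUE/FALSE
    example_source: str                 # E
    test_set_size: int
    owner: str
    current_pass_rate: Optional[float]  # None until you have a baseline
    target_pass_rate: float
    on_failure: str                     # block ship / fix this week / alert
    cadence: str                        # C: pre-ship / daily / weekly


def to_csv(specs) -> str:
    """Write specs as CSV text that a spreadsheet can import."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(EvalSpec)])
    writer.writeheader()
    for spec in specs:
        writer.writerow(asdict(spec))
    return buf.getvalue()


# Placeholder values, for illustration only.
billing = EvalSpec(
    name="Billing dispute -> must hand off",
    scenario="User reports a billing dispute",
    rule="If the user mentions a billing dispute, the bot must hand off to a human.",
    example_source="support tickets",
    test_set_size=30,
    owner="(your name)",
    current_pass_rate=None,
    target_pass_rate=0.95,
    on_failure="block ship",
    cadence="pre-ship",
)

print(to_csv([billing]))
```

A sheet works just as well. The point is that every eval has the same ten fields filled in.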

5 ready-to-use eval ideas (pick one)

If you’re a beginner, don’t invent from scratch. Start here.

1) Hallucination (making things up)

Rule: “If the info is not in our docs, the assistant must say it doesn’t know OR ask a question.”

2) Cite the right source

Rule: “If answering a policy question, the assistant must link the correct help article.”

3) Handoff / escalation

Rule: “If the user is angry OR stuck after 2 turns, the assistant must hand off to a human.”

4) Tool use

Rule: “If the question needs account data, the assistant must use the account tool (not guess).”

5) High-risk refusal

Rule: “If the user asks for restricted advice, the assistant must refuse and offer a safe alternative.”

Which one should you build first?

Start with hallucination or handoff.

They’re easy to make TRUE/FALSE, and the harm is obvious.

Monday plan: build your first eval in ~90 minutes

1) Pick one failure that really hurts (10 min)

2) Pull 30–50 real examples (15 min)

3) Write one strict TRUE/FALSE rule (10 min)

4) Label 15 examples by hand (15 min)

5) (Optional) add a simple LLM judge (15 min)

6) Run the full set and write down your baseline (10 min)

7) Decide where it runs next: pre-ship or daily sample (15 min)

Rule of thumb:

If the pass rate goes down after a change, stop and fix.
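
That rule of thumb can be written as a small gate. A minimal sketch, assuming you store the baseline pass rate somewhere and compute the new one on the same test set. The function names and the optional `tolerance` are my own additions.

```python
def safe_to_ship(baseline: float, current: float, tolerance: float = 0.0) -> bool:
    """True when the pass rate has not dropped by more than the tolerance."""
    return current >= baseline - tolerance


def gate(baseline: float, current: float, tolerance: float = 0.0) -> int:
    """Print a verdict and return an exit code: 0 means ship, 1 means stop and fix."""
    if safe_to_ship(baseline, current, tolerance):
        print(f"OK: pass rate {current:.0%} (baseline {baseline:.0%})")
        return 0
    print(f"STOP: pass rate fell from {baseline:.0%} to {current:.0%}")
    return 1


# Hypothetical numbers: 41/50 before the change, 46/50 and then 38/50 after.
gate(41 / 50, 46 / 50)
gate(41 / 50, 38 / 50)
```

In a CI job you could pass the return value of `gate(...)` to `sys.exit` so that a drop fails the build.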

Common mistakes (and fixes)

Mistake: fuzzy rules

Fix: write strict TRUE/FALSE rules in words a customer could understand.

Mistake: fake clean examples

Fix: use real logs/tickets. Messy is real.

Mistake: never refreshing examples

Fix: add new examples every month.

Mistake: too many opinions

Fix: name one owner who decides.

Tools (keep it simple)

Start with:

spreadsheet / Notion

CSV export of logs or tickets

(optional) one LLM call as a judge

Upgrade only when it hurts:

lightweight eval libraries

dashboards

CI checks for prompt/model changes

Career upside (why this matters for PMs)

Most PMs chase prompts and shiny demos.

Very few can:

define “good” in checkable terms

build eval loops

connect AI behaviour to business outcomes (CSAT, churn, revenue)

If you can say:

“Here are the behaviours that matter. Here’s how we measure them. Here’s how we improve them.”

You become the person who makes the AI reliable.

That’s rare. And valuable.

Final takeaway

Evals aren’t exciting.

But they’re how AI products become dependable.

Start small:

pick one scenario

write one strict rule

test on real examples

run it every time you ship changes

See you in the next edition,

Sid