If you spend five minutes on the engineering side of Twitter (X) right now, you will see the same complaint over and over again.

“I don’t get it.”

You look at the documentation for the Model Context Protocol (MCP).

You see the complex diagrams. You see the “Client-Host-Server” architecture that looks like it was designed in 1995. You see the JSON-RPC messages piped over stdio.

And you think to yourself:

“Why do I need a special protocol just to let my AI talk to my database? Can’t I just write an API call?”

For the longest time, I thought the same thing.

It feels like over-engineering. It feels like “bloat.” It feels like a solution searching for a problem.

The truth is, if you think MCP is useless, you are looking at it through the lens of a Human Developer.

But MCP wasn’t built for humans.

It was built for the AI Agent that is going to replace your integration code.

Here is why the industry is betting everything on MCP, and why, as a Product Manager, you need to pay attention.

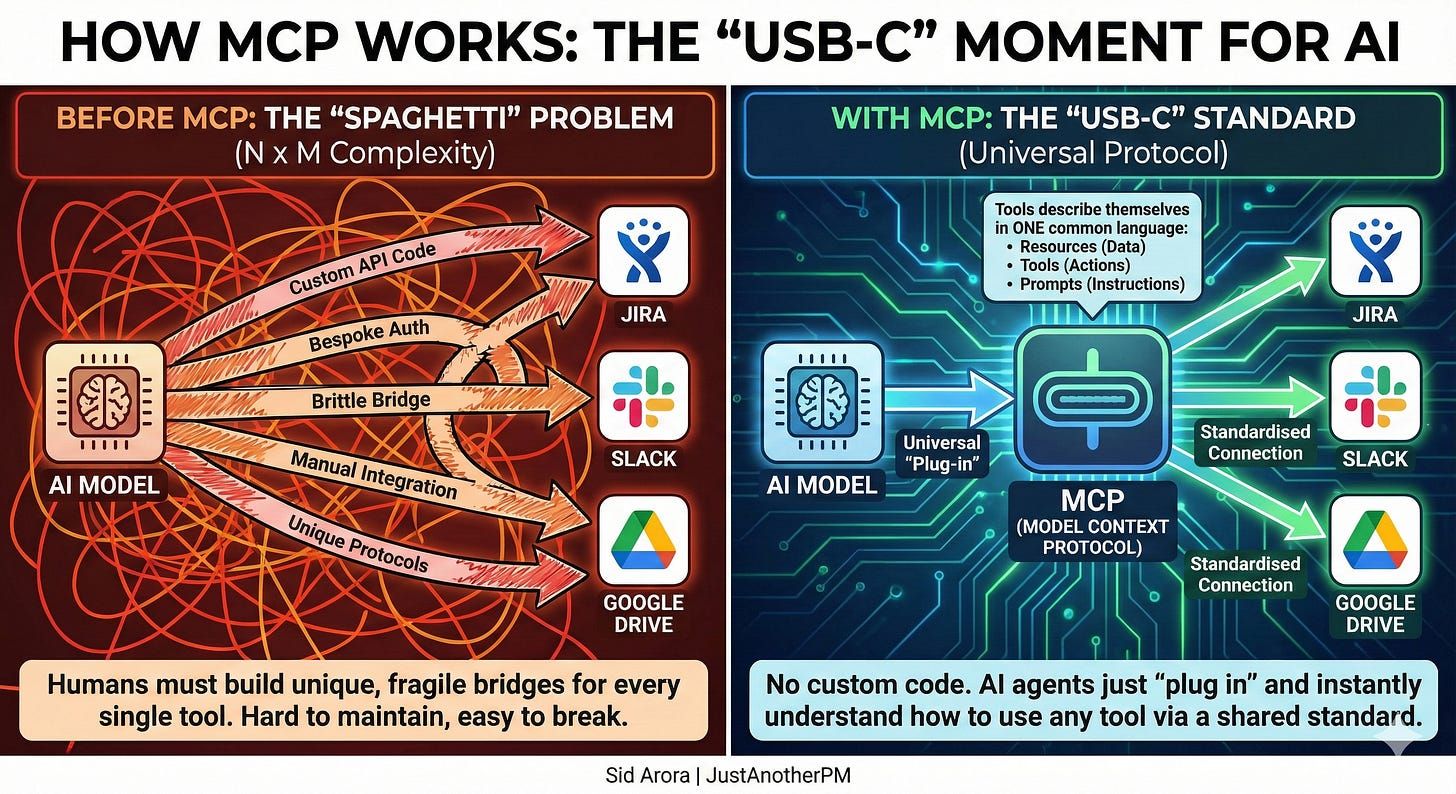

The “Spaghetti” Problem No One Wants To Talk About

Let’s pretend you are a Product Manager building an AI assistant for your company.

You want this assistant to be helpful. You want it to be able to check your calendar, update a Jira ticket, and send a message on Slack.

In the “Old World” (pre-2025), here is what your engineering team had to do:

They had to read the Google Calendar API documentation.

They had to write custom code to authenticate with Google.

They had to read the Jira API documentation.

They had to write custom code to authenticate with Jira.

They had to read the Slack API documentation...

You get the point.

This is what we call “Integration Spaghetti.” Every single tool you want your AI to use requires a bespoke, brittle bridge built by a human hand.

If Jira changes their API? Your bridge breaks.

If you want to switch from OpenAI to Anthropic? Your bridge breaks (because their tool-calling formats are different).

This is the N x M Problem.

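If there are N AI models (Claude, GPT-4, Gemini) and M tools (Salesforce, Notion, Linear), you end up building and maintaining up to N × M separate bridges. Three models and three tools already means nine of them, and every new model or tool multiplies the work.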
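To put rough numbers on that, here is a minimal sketch of the arithmetic. It assumes that, with a shared protocol, each model needs one client and each tool needs one server; the function names are hypothetical and exist only for this illustration.

```python
# Model names are taken from the examples above; the counts are illustrative.
models = ["Claude", "GPT-4", "Gemini"]


def bespoke_bridges(n_models: int, n_tools: int) -> int:
    """One hand-written bridge for every (model, tool) pair."""
    return n_models * n_tools


def shared_protocol_adapters(n_models: int, n_tools: int) -> int:
    """Assumption: one protocol client per model plus one server per tool."""
    return n_models + n_tools


for n_tools in (3, 10, 50):
    n_models = len(models)
    print(
        f"{n_models} models, {n_tools} tools: "
        f"{bespoke_bridges(n_models, n_tools)} bridges vs "
        f"{shared_protocol_adapters(n_models, n_tools)} adapters"
    )
```

The gap widens as the tool count grows, which is the whole argument for agreeing on one connector.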

And this is where the Model Context Protocol changes the game.

The “USB-C” Moment For AI

The best way to explain MCP to a non-technical stakeholder is to look at your laptop charger. Ten years ago, every device had a different port.

Cameras used Mini-USB.

Androids used Micro-USB.

Laptops used barrel jacks.

Monitors used HDMI.

It was a nightmare.

Then came USB-C.

USB-C didn’t make electricity “better.” It didn’t make data “faster” by magic.

It standardized the connection.

It said: “I don’t care if you are a hard drive, a screen, or a charger. If you plug into this port, we can talk.”

MCP is the USB-C port for Artificial Intelligence.

It asks every tool that adopts it to describe itself in exactly the same way.

Resources: “Here is my data (files, logs, database rows).”

Tools: “Here is what I can do (create ticket, send email).”

Prompts: “Here is how you should talk to me.”
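For the curious, here is a rough sketch of what that self-description could look like as data. The ticketing server and every name in it are hypothetical. The field names (`uri`, `inputSchema`, and so on) are modeled on my reading of the MCP specification and simplified, and a real server returns each category through its own list request rather than one combined catalog.

```python
# Simplified, hypothetical catalog for an imaginary ticketing server.
catalog = {
    "resources": [
        # "Here is my data."
        {"uri": "tickets://open", "name": "Open tickets", "mimeType": "application/json"},
    ],
    "tools": [
        # "Here is what I can do," including the exact arguments required.
        {
            "name": "create_ticket",
            "description": "Create a new ticket.",
            "inputSchema": {
                "type": "object",
                "properties": {"title": {"type": "string"}},
                "required": ["title"],
            },
        },
    ],
    "prompts": [
        # "Here is how you should talk to me."
        {"name": "summarize_ticket", "description": "Summarize a ticket for a status update."},
    ],
}


def describe(catalog: dict) -> list[str]:
    """Flatten the catalog into one readable line per capability."""
    return [f"{kind}: {entry['name']}" for kind, entries in catalog.items() for entry in entries]


for line in describe(catalog):
    print(line)
```

The point is that the shape stays the same whether the server sits in front of a ticketing system, a calendar, or a database.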

By standardizing this, we move from a world where humans have to write integration code to a world where AIs can just “plug in” to a tool and work out how to use it from the tool’s own description.

How It Actually Works

So, we know it’s a “USB-C port.” But what happens when you plug it in?

MCP is built on a simple idea: AI tools shouldn’t be guessing.

Instead of randomly trying to scrape data or call APIs, MCP has the AI ask what is available first and then request it in a fixed format. It turns a “black box” interaction into a polite, structured conversation.

Think of it like a Library.

The AI Client is the curious Student.

The MCP Server is the strict Librarian.

The Student cannot just run into the stacks and start tearing pages out of books. They have to follow the protocol.

Step 1: The Handshake (Discovery). The Student (AI) walks up to the desk and asks, “What do you have?” The Librarian (Server) hands over a specific catalog: “You can read these Books (Resources), you can use these Study Rooms (Tools), and you can use these Forms (Prompts).”

Step 2: The Selection (Intent). The Student looks at the catalog. If the user says, “Summarize this issue and file a ticket,” the AI decides: “Okay, I need the ‘Get Thread’ book from Slack and the ‘Create Issue’ tool from Jira.”

Step 3: The Execution (Action). The Student fills out the request form exactly as the Librarian demanded. The Librarian checks it, goes to the back room, does the work safely, and brings back the result. The AI never touches the raw database directly.

Step 4: The Response. The Student takes the result and tells the user: “Done! I created ticket #3812.”

This flow, Discover, Decide, Act, Respond, is what makes MCP so predictable.
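If you want to see the four steps as messages, here is a toy sketch. It is not a real MCP server: there is no transport, no authentication, and no MCP library, just an in-memory function standing in for the Librarian. The method names `tools/list` and `tools/call` follow the MCP specification as I understand it; the `create_issue` tool, the hard-coded ticket number, and the helper names are assumptions for illustration.

```python
# Toy in-memory "Librarian": no real transport, auth, or MCP library involved.
TOOLS = {
    "create_issue": {
        "description": "Create an issue in the tracker.",
        "inputSchema": {"type": "object", "required": ["title"]},
    }
}


def error(request_id, code, message):
    """Build a JSON-RPC-style error reply."""
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle(request):
    """Answer one JSON-RPC-style request the way a strict Librarian would."""
    method, request_id = request["method"], request["id"]
    if method == "tools/list":
        tools = [{"name": name, **spec} for name, spec in TOOLS.items()]
        return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": tools}}
    if method == "tools/call":
        params = request.get("params", {})
        name, args = params.get("name"), params.get("arguments", {})
        if name not in TOOLS:
            return error(request_id, -32602, f"Unknown tool: {name}")
        missing = [k for k in TOOLS[name]["inputSchema"]["required"] if k not in args]
        if missing:
            return error(request_id, -32602, f"Missing arguments: {missing}")
        # Stubbed work: a real server would call the ticketing system here.
        text = f"Created ticket #3812: {args['title']}"
        return {"jsonrpc": "2.0", "id": request_id, "result": {"content": [{"type": "text", "text": text}]}}
    return error(request_id, -32601, f"Method not found: {method}")


# Step 1, Discover: ask what is on offer.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
tool_names = [tool["name"] for tool in listing["result"]["tools"]]

# Step 2, Decide: a hard-coded stand-in for the model's choice.
chosen = "create_issue" if "create_issue" in tool_names else None

# Step 3, Act: fill out the form exactly as the catalog demands.
reply = handle({
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {"name": chosen, "arguments": {"title": "Login page times out"}},
})

# Step 4, Respond: pass the result back to the user.
answer = reply["result"]["content"][0]["text"]
print(answer)
```

Notice that a request for a tool that is not in the catalog gets an error instead of being executed. That refusal is the Librarian doing her job.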

It means you can swap the “Student” (use GPT-4 instead of Claude) or swap the “Library” (use Linear instead of Jira), and the flow stays exactly the same.

But Wait... The Skeptics Are Right (Sort Of)

Now, I know what you are thinking.

“Okay, that sounds great. But why are all the developers complaining?”

Because right now, MCP is inefficient.

If you go to YouTube and watch channels like Prompt Engineering, you will see them tearing MCP apart. They point out a massive flaw called Token Bloat.

Here is how it works:

Your AI connects to an MCP server (say, the “Jira” server).

That server has 50 different tools (Create Ticket, Edit Ticket, Delete Ticket, etc.).

The AI client then typically takes the definitions for all 50 tools and shoves them into the AI’s short-term memory (Context Window), whether or not the task needs them.

This is like walking into a restaurant and having the waiter read the entire menu, including the ingredients for every dish, before you can order a glass of water.

It is wasteful.
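Here is a back-of-the-envelope sketch of that waste. Everything in it is an assumption: the 50 tool definitions are made up, and the “4 characters per token” rule is a crude heuristic, not a real tokenizer. The shape of the result matters more than the exact numbers.

```python
import json


def rough_token_count(text: str) -> int:
    """Crude heuristic: roughly 4 characters per token. Not a real tokenizer."""
    return max(1, len(text) // 4)


def make_tool(index: int) -> dict:
    """Build one hypothetical tool definition of a plausible size."""
    return {
        "name": f"jira_tool_{index}",
        "description": "Hypothetical tool that does one specific thing to a ticket.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "ticket_id": {"type": "string", "description": "Ticket key."},
                "comment": {"type": "string", "description": "Optional note."},
            },
            "required": ["ticket_id"],
        },
    }


tools = [make_tool(i) for i in range(50)]
per_tool = [rough_token_count(json.dumps(tool)) for tool in tools]

upfront_cost = sum(per_tool)  # every definition loaded before the first question
needed_cost = per_tool[0]     # the single tool the task actually uses

print(f"All 50 definitions: ~{upfront_cost} tokens")
print(f"The one you needed: ~{needed_cost} tokens")
```

Multiply that by every connected server and every conversation, and you can see why the engineers are annoyed.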

In fact, Anthropic (the creators of MCP!) published an example showing that a “Code-First” approach, where the AI writes code to call tools instead of loading every tool definition up front, cut token usage by 98.7% compared with standard MCP tool calls.

98.7%.

That is a staggering number.

So, if standard MCP tool calls can burn that many more tokens than just letting the AI write code, why are Google, Microsoft, and everyone else adopting it?

The Boring Truth: Governance Wins

The reason MCP “won” isn’t technical.

It’s political.

Imagine you are the CIO of a massive Fortune 500 bank.

Your developers come to you and say: “Hey, we want to let this AI agent write its own Python code to interact with our customer database. It uses about 98% fewer tokens!”

Do you know what you would say?

“Absolutely not.”

Letting an AI write and execute arbitrary code on your servers is a security nightmare. It is the “Wild West”.

Enter MCP.

MCP allows that same CIO to say:

“Okay, you can connect the AI to the database. But ONLY via this specific MCP Server. And that server ONLY allows these 3 specific actions: ‘Read Balance’, ‘View Transaction’, and ‘Freeze Account’.”

It puts the AI in a Sandbox. It gives the enterprise control. The value of MCP isn’t that it’s the fastest way to build. The value is that it is the safest way to scale.
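The CIO’s rule boils down to an allowlist. Here is a minimal sketch of that idea in plain Python. It is not an MCP server, the handlers are stubs, and every name (`ALLOWED_ACTIONS`, `call_action`, `ActionNotAllowed`) is hypothetical.

```python
class ActionNotAllowed(Exception):
    """Raised when the AI asks for anything outside the approved list."""


# The only three actions the CIO signed off on. Handlers are stubs:
# a real server would talk to the banking backend here.
ALLOWED_ACTIONS = {
    "read_balance": lambda account_id: f"balance for {account_id}: (stub)",
    "view_transaction": lambda account_id: f"transactions for {account_id}: (stub)",
    "freeze_account": lambda account_id: f"account {account_id} frozen (stub)",
}


def call_action(name: str, account_id: str) -> str:
    """Run an approved action, or refuse anything else."""
    handler = ALLOWED_ACTIONS.get(name)
    if handler is None:
        raise ActionNotAllowed(f"'{name}' is not an approved action")
    return handler(account_id)


print(call_action("read_balance", "ACC-1"))

try:
    call_action("delete_all_accounts", "ACC-1")
except ActionNotAllowed as exc:
    print(f"Refused: {exc}")
```

The AI can ask for anything it likes. It can only get what is on the list.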

The “Network Effect” You Can’t Ignore

There is one final reason you need to care about this.

Laziness.

(Or, more professionally: “Velocity.”)

In early 2025, something shifted.

Replit and Cursor added native support.

Suddenly, the ecosystem exploded.

Today, if you want your product to integrate with Notion, Slack, Postgres, and Google Drive, you don’t need to hire a team of backend engineers to build those four integrations.

You just download the pre-built MCP Servers for those tools.

Boom. Done.

Your AI can now talk to all of them.

This is the power of a standard.

Because everyone agreed to speak the same language, the “cost” of adding a new integration dropped from Weeks to Minutes.

How To Use This Information

So, what do you actually do with this?

You don’t need to learn how to write a JSON-RPC handler. But you do need to change your product roadmap.

1. Stop building custom “Connectors.” If you are building a SaaS product, do not waste time building a bespoke “Zapier-style” integration layer. Build an MCP Server for your product instead. If you have an MCP server, any MCP-compatible AI agent can use your product.

2. Look for the “Gateway.” The next big opportunity is in managing these connections. As a PM, think about “User Permissions.” How do you ensure the AI doesn’t delete a file it shouldn’t? This is the new frontier of User Experience.

3. Ignore the “Bloat” complaints. Yes, the engineers are right. It is inefficient right now. But optimization always follows standardization. (Remember how slow the early Web was?) The protocol will get better. Do not bet against the standard just because version 1.0 is heavy.

The Final Takeaway

I understand why MCP might look like a step backward.

It looks like we are taking the infinite creativity of AI and forcing it into a boring, rigid, bureaucratic box.

But that is exactly the point.

To go from a cool demo to global infrastructure, AI needed a boring, rigid box.

It needed a USB-C port.

MCP is that port.

And now that we have it, the real building can finally begin.

That is it for today. Talk soon,

Sid