LinkedIn has over 1.2 billion members.

Every month, 260 million of them search for jobs, submitting millions of applications.

LinkedIn's job recommendations — the product JUDE powers behind the scenes (source)

Behind the scenes, a recommendation engine decides which jobs appear in your feed, which ones show up first when you search, and which ones get flagged as a strong match.

For years, that engine relied on handcrafted features.

Engineers built taxonomies of job titles, skills, and industries. Smaller ML models extracted signals from profiles and job postings.

Then, they fed these features into ranking models that scored how well a job matched a member. It worked. But it had problems.

The Limits of Handcrafted Features

LinkedIn's previous embedding platform, called Pensieve, used a deep neural network inspired by Deep Structured Semantic Models.

It converted job postings and member profiles into numerical vectors: lists of numbers that capture meaning in a format machines can compare.

Pensieve delivered real improvements. Each iteration produced statistically significant single-digit percentage gains across products. But the system had structural limitations.

First, it depended on smaller ML models to extract features.

These models were inaccurate.

A job title classifier might label "Growth Product Manager" and "Senior PM, Acquisition" differently, even though they describe overlapping roles.

Second, it relied on taxonomies.

These are structured category systems that engineers maintained manually. They had to map all job titles, skills, and industries into predefined buckets.

When new roles emerged, the taxonomy lagged behind reality.

Third, the upstream pipelines were rigid.

Changing how they extracted features meant touching multiple interconnected systems. Innovation was slow. The team knew they needed a different approach.

Instead of building better handcrafted features, they wanted the model itself to understand what a job posting means and what a member's profile represents.

That meant using a large language model.

LinkedIn's recommendation pipeline — JUDE powers the embedding-based retrieval (EBR) stage (source)

From Keywords to Understanding

The core idea behind JUDE (Job Understanding Data Expert) is simple.

Instead of extracting dozens of handcrafted signals from a job posting and feeding them separately into a ranking model, you give the entire text to an LLM and let it produce a single dense vector that captures the full meaning.

An embedding is a way of turning text into coordinates in a mathematical space.

Similar texts end up close together. A job posting for a "Senior Product Manager: Search & Discovery" and a member profile describing ten years of search product experience would produce embeddings that sit near each other in this space.

A posting for a firmware engineer would sit far away.
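To make "close together" concrete, here is a minimal sketch using cosine similarity on hand-made three-dimensional vectors. The vectors and their values are invented for illustration and are not real model output; production embeddings have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings (made-up numbers, not real model output).
search_pm_job = [0.9, 0.8, 0.1]
search_pm_member = [0.8, 0.9, 0.2]
firmware_job = [0.1, 0.2, 0.9]

sim_match = cosine_similarity(search_pm_job, search_pm_member)
sim_mismatch = cosine_similarity(search_pm_job, firmware_job)

# The search PM pair points in nearly the same direction; the firmware job does not.
print(sim_match > sim_mismatch)
```

The search PM posting and the search PM profile score close to 1, while the firmware posting scores much lower against the same posting.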

Compression is the advantage of LLM-generated embeddings.

Instead of maintaining dozens of separate feature extractors, each with its own models and pipelines, one fine-tuned LLM replaces them all.

It reads the raw text and produces a single vector that downstream ranking models consume directly. But there is a catch.

An off-the-shelf LLM understands language in general.

It does not understand LinkedIn's job marketplace, the specific wording, the implicit signals in how people write profiles in different industries, or the patterns that separate a strong match from a weak one.

That requires fine-tuning on LinkedIn's proprietary data.

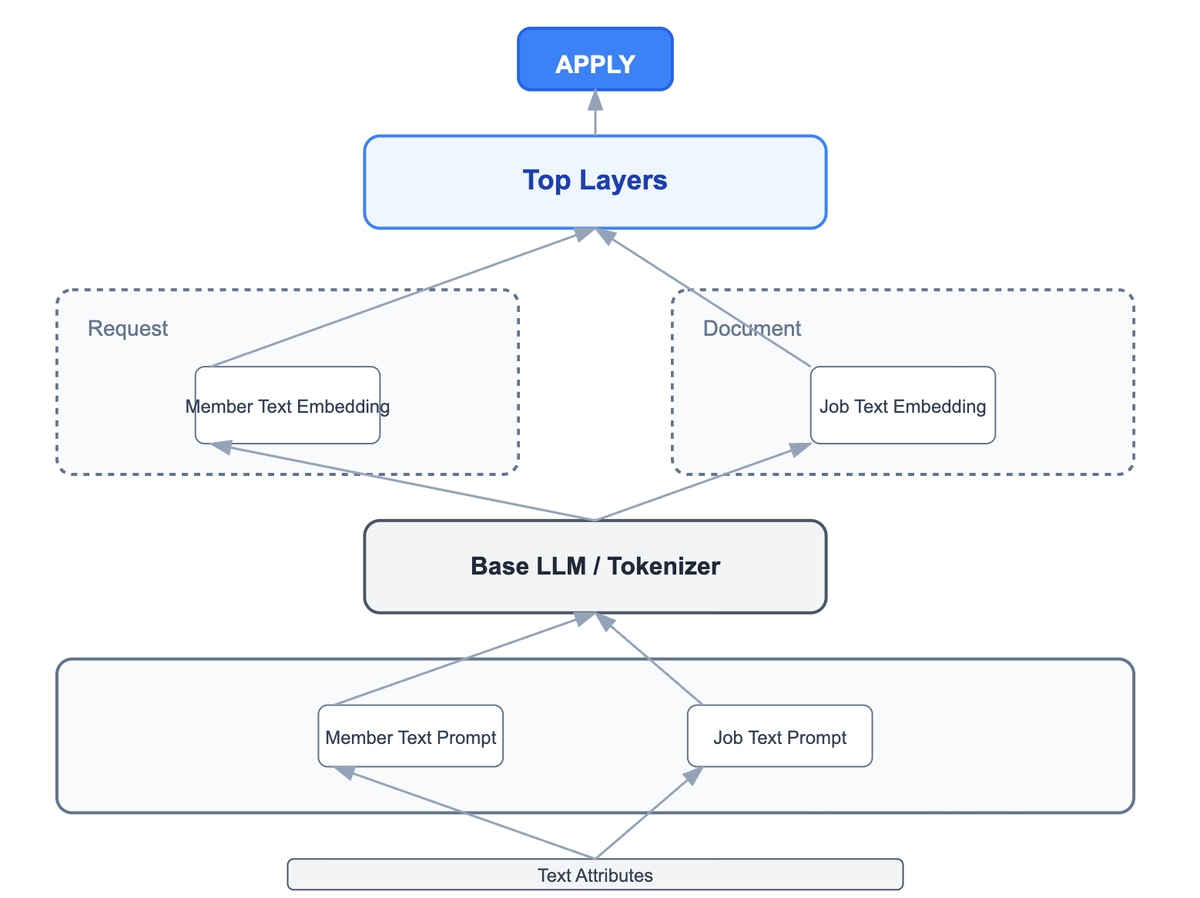

The Two-Tower Architecture

JUDE uses a two-tower model.

Picture two identical buildings standing side by side. One tower processes job postings. The other processes member profiles (or resumes).

Both towers share the same underlying LLM (weights and language understanding), but each receives its input through its own prompt template.

JUDE's two-tower architecture. One LLM, two specialised towers (source)

The job tower might see: "Given this job posting, generate an embedding that captures the role requirements, seniority, and skills needed."

The member tower might see: "Given this member profile, generate an embedding that captures their experience, skills, and career trajectory."

Each tower produces a vector. To check how well a member matches a job, the system measures the distance between the two vectors.
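The shape of this design can be sketched in a few lines. This is a toy under stated assumptions: `shared_encoder` is a hashed bag-of-words stand-in for the fine-tuned LLM, and the template strings, function names, and sample texts are hypothetical. Only the structure (one shared encoder, two prompt templates, a vector comparison) mirrors the description above.

```python
import hashlib
import math

DIM = 4096  # toy dimensionality; large enough that hash collisions are rare

def shared_encoder(text):
    """Stand-in for the shared LLM: hashed bag-of-words, L2-normalised."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).hexdigest()
        vec[int(digest, 16) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

# Hypothetical prompt templates, one per tower.
JOB_TEMPLATE = "Given this job posting, generate an embedding: {text}"
MEMBER_TEMPLATE = "Given this member profile, generate an embedding: {text}"

def job_tower(posting):
    return shared_encoder(JOB_TEMPLATE.format(text=posting))

def member_tower(profile):
    return shared_encoder(MEMBER_TEMPLATE.format(text=profile))

def match_score(job_vec, member_vec):
    """Dot product of unit vectors, which equals cosine similarity."""
    return sum(j * m for j, m in zip(job_vec, member_vec))

member = member_tower("product manager ten years search discovery experience")
pm_job = job_tower("senior product manager search discovery")
fw_job = job_tower("firmware engineer embedded microcontrollers")

pm_score = match_score(pm_job, member)
fw_score = match_score(fw_job, member)
```

Each text passes through the encoder exactly once, and any job vector can then be compared with any member vector without touching the encoder again.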

This architecture exists because of a fundamental trade-off.

A more powerful approach, a cross-encoder, would feed both the job posting and the member profile into the model simultaneously.

That allows it to compare every word against every other word.

Cross-encoders are more accurate, and LinkedIn confirmed this in its experiments. But cross-encoders are too expensive at LinkedIn's scale.

With over 15 million active job postings and millions of member profiles, you can't run a 7-billion-parameter model on every possible pair.

The two-tower design solves this by computing each embedding once and then comparing vectors with simple maths. That is the difference between running millions of LLM inferences per query and running one.
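A rough back-of-the-envelope comparison shows why. The job count comes from the figure quoted above; the member count is a placeholder assumption, since the text only says "millions".

```python
# One figure from the article, plus one labelled assumption.
NUM_JOBS = 15_000_000    # "over 15 million active job postings"
NUM_MEMBERS = 1_000_000  # assumption: placeholder for "millions of member profiles"

# Cross-encoder: one LLM forward pass per (job, member) pair.
cross_encoder_passes = NUM_JOBS * NUM_MEMBERS

# Two-tower: one forward pass per job plus one per member; pairs are
# compared afterwards with cheap vector arithmetic.
two_tower_passes = NUM_JOBS + NUM_MEMBERS

savings_factor = cross_encoder_passes / two_tower_passes
print(f"{cross_encoder_passes:,} vs {two_tower_passes:,} forward passes")
```

Even with this conservative member count, scoring every pair with a cross-encoder needs about a million times more forward passes than embedding each item once.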

To recover some of the accuracy lost by choosing the two-tower approach over the cross-encoder, the team used a distillation technique.

They trained a cross-encoder model offline, where cost doesn't matter, and then used its outputs as a teaching signal for the two-tower model.

Distillation closed 50% of the performance gap between the two approaches. The two-tower model recovered much of the cross-encoder's accuracy without any of its serving cost.
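The exact distillation objective is not described here. As a minimal sketch, assume the student (two-tower) score is a dot product and the loss is the mean squared error against the teacher (cross-encoder) score. All numbers, names, and the learning rate are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def distillation_loss(student_scores, teacher_scores):
    """Mean squared error between student and teacher match scores."""
    n = len(student_scores)
    return sum((s - t) ** 2 for s, t in zip(student_scores, teacher_scores)) / n

def student_scores(job_vecs, member_vecs):
    return [dot(j, m) for j, m in zip(job_vecs, member_vecs)]

def gradient_step(job_vecs, member_vecs, teacher_scores, lr=0.1):
    """One gradient-descent step on the job vectors (member side held fixed for brevity)."""
    n = len(job_vecs)
    updated = []
    for j, m, t in zip(job_vecs, member_vecs, teacher_scores):
        err = dot(j, m) - t
        # Gradient of (j.m - t)^2 / n with respect to j is 2 * err * m / n.
        updated.append([jk - lr * 2 * err * mk / n for jk, mk in zip(j, m)])
    return updated

# Hypothetical teacher scores for three (job, member) pairs, computed offline.
teacher = [0.9, 0.2, 0.6]
job_vecs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
member_vecs = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]

loss_before = distillation_loss(student_scores(job_vecs, member_vecs), teacher)
job_vecs = gradient_step(job_vecs, member_vecs, teacher)
loss_after = distillation_loss(student_scores(job_vecs, member_vecs), teacher)
```

After one step the student's scores move towards the teacher's, so `loss_after` is smaller than `loss_before`. The expensive teacher is only needed while training; serving uses the student alone.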

Training a 7B Model on LinkedIn's Data

The team fine-tuned a 7-billion-parameter LLM using LoRA (Low-Rank Adaptation).

Rather than updating all 7 billion weights, which requires enormous compute, LoRA freezes the original model and adds small, trainable matrices to the attention layers.

Think of it as teaching the model new skills by adjusting a few dials. This made training feasible on NVIDIA H100 GPUs using multi-node distributed training.
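Here is a minimal pure-Python sketch of the LoRA idea for a single weight matrix. The layer sizes, rank, and scaling factor are illustrative assumptions, not LinkedIn's settings.

```python
import random

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def lora_forward(x, W, A, B, alpha, rank):
    """Compute x @ W + (alpha / rank) * x @ A @ B.

    W is the frozen pretrained weight; only A and B would be trained.
    """
    base = matmul(x, W)
    update = matmul(matmul(x, A), B)
    scale = alpha / rank
    return [[b + scale * u for b, u in zip(base_row, update_row)]
            for base_row, update_row in zip(base, update)]

random.seed(0)
d, rank, alpha = 8, 2, 4  # hypothetical toy sizes
W = [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]        # frozen, d x d
A = [[random.gauss(0, 0.01) for _ in range(rank)] for _ in range(d)]  # trainable, d x rank
B = [[0.0] * d for _ in range(rank)]                                  # trainable, rank x d, starts at zero
x = [[1.0] * d]

# Because B starts at zero, the adapted layer initially matches the frozen one.
matches_frozen = lora_forward(x, W, A, B, alpha, rank) == matmul(x, W)

# Parameter count for one hypothetical 4096 x 4096 attention projection.
full_params = 4096 * 4096
lora_params = 4096 * 16 + 16 * 4096  # rank 16, an illustrative choice
```

In this example the two small matrices hold 131,072 trainable values against 16,777,216 in the full matrix, a 128-fold reduction for that layer.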

The training data combined two types of signals.

Relevance labels (human-annotated judgements of whether a job matches a member) established baseline quality.

Engagement labels (real application data from millions of job seekers) aligned the model with actual user behavior.

Relevance labels taught the model what a good match is.

Engagement labels taught it what real people actually act on.

This dual-signal approach is important because neither type is sufficient.

If you only train on relevance labels, the model learns what a "correct" match looks like in theory, but might miss the messy reality of how people actually search for jobs.

A PM might apply for a strategy role that no taxonomy would flag as relevant.

If you only train on engagement labels, the model learns what people click on, but click behaviour is noisy and biased toward popular postings.

Combining both gives the model semantic precision and real-world calibration.

The team also engineered a combination of three loss functions to optimise different aspects of embedding quality simultaneously:

One for predicting application probability

One for ensuring similar items cluster together in vector space

One for handling noisy outliers in the data.
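The three losses are not named here, so the sketch below uses common stand-ins chosen purely for illustration: binary cross-entropy for application probability, an InfoNCE-style contrastive loss for clustering, and a Huber loss as an outlier-tolerant term. The weights, temperature, and function names are all assumptions.

```python
import math

def binary_cross_entropy(p_apply, applied):
    """Penalise a predicted application probability against a 0/1 label."""
    eps = 1e-12
    return -(applied * math.log(p_apply + eps)
             + (1 - applied) * math.log(1 - p_apply + eps))

def contrastive_loss(pos_score, neg_scores, temperature=0.1):
    """InfoNCE-style: push the positive pair's score above the negatives'."""
    logits = [pos_score / temperature] + [s / temperature for s in neg_scores]
    max_logit = max(logits)
    log_sum = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_sum - logits[0]

def huber_loss(pred, target, delta=1.0):
    """Quadratic for small errors, linear for large ones, so outliers pull less."""
    err = abs(pred - target)
    if err <= delta:
        return 0.5 * err * err
    return delta * (err - 0.5 * delta)

def combined_loss(p_apply, applied, pos_score, neg_scores,
                  pred_relevance, label_relevance, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of the three stand-in terms."""
    w_apply, w_cluster, w_robust = weights
    return (w_apply * binary_cross_entropy(p_apply, applied)
            + w_cluster * contrastive_loss(pos_score, neg_scores)
            + w_robust * huber_loss(pred_relevance, label_relevance))

good = combined_loss(0.9, 1, 0.9, [0.1, 0.2], 0.8, 1.0)
bad = combined_loss(0.1, 1, 0.1, [0.9, 0.8], 0.0, 1.0)
```

A single weighted sum lets one training run trade off the three goals; in practice the weights would be tuned on held-out data.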

Making It Work in Real Time

A job poster expects their listing to show up in recommendations soon.

A member who updates their profile expects to see a change in their feed in seconds. This means the system cannot rely on overnight batch jobs to regenerate embeddings.

JUDE solves this with a streaming architecture built on Apache Kafka and Samza. When a job posting is created or a member profile is updated, the change flows through a Kafka stream.

A dedicated processing pipeline picks it up, runs it through the LLM, and writes the new embedding to a key-value store called Venice.

The entire process, from database change to fresh embedding available for ranking, completes with a p95 latency under 300 milliseconds.

The system includes a smart optimisation: change detection.

Not every profile edit is meaningful.

If a member fixes a typo in their summary, the semantic meaning has not changed, and regenerating the embedding would waste GPU cycles.

A hashing mechanism compares the new input to the previous version and only triggers inference when the change is significant.

This reduces inference volume roughly sixfold.
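A minimal sketch of hash-based change detection follows. How JUDE decides that a change is "significant" is not described, so this version only skips inputs that are identical after simple normalisation (lowercasing and collapsing whitespace). The class and function names are hypothetical.

```python
import hashlib

def fingerprint(text):
    """Hash of the normalised text (lowercased, whitespace collapsed)."""
    normalised = " ".join(text.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()

class ChangeDetector:
    """Remembers the last fingerprint seen for each entity."""

    def __init__(self):
        self._last_seen = {}  # entity_id -> fingerprint

    def needs_inference(self, entity_id, text):
        """Return True only when the normalised input differs from the last one."""
        new_fp = fingerprint(text)
        if self._last_seen.get(entity_id) == new_fp:
            return False
        self._last_seen[entity_id] = new_fp
        return True

detector = ChangeDetector()
first = detector.needs_inference("member-1", "Senior PM, search products")
cosmetic = detector.needs_inference("member-1", "  senior pm,  SEARCH products ")
real_edit = detector.needs_inference("member-1", "Senior PM, ads products")
```

Here the first call and the real edit trigger inference, while the cosmetic edit does not. Catching a typo fix, as in the example above, would need a looser comparison than an exact hash.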

The embeddings flow to two destinations.

For online use, they are written to Venice, LinkedIn's high-performance key-value store, where ranking models can look them up in real time.

For offline use, they are published to Kafka topics and stored in HDFS, where data scientists use them to train and evaluate the next generation of models.
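The dual-sink flow can be sketched with in-memory stand-ins. The dictionary plays the role of the online key-value store and the list plays the role of the offline topic. No real Kafka, Samza, or Venice client calls are used, and `fake_embed` is a placeholder for LLM inference.

```python
def fake_embed(text):
    """Placeholder for LLM inference: returns a tiny deterministic vector."""
    return [float(len(text)), float(len(text.split()))]

def handle_change_event(event, online_store, offline_log, embed=fake_embed):
    """Embed the changed entity once, then write the result to both sinks."""
    embedding = embed(event["text"])
    # Online sink: latest embedding per entity, for real-time lookups.
    online_store[event["entity_id"]] = embedding
    # Offline sink: append-only record, for later training and evaluation.
    offline_log.append({"entity_id": event["entity_id"], "embedding": embedding})
    return embedding

online_store = {}
offline_log = []

handle_change_event({"entity_id": "job-1", "text": "Senior Product Manager"},
                    online_store, offline_log)
handle_change_event({"entity_id": "job-1", "text": "Senior Product Manager, Search"},
                    online_store, offline_log)
```

The online store keeps only the newest embedding for `job-1`, while the offline log keeps both versions, which is what makes it useful for training later models.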

This dual-sink architecture means the same embeddings power both live recommendations and future model improvements.

For initial deployment, the team could not wait for real-time events to trickle in for every entity.

They needed embeddings for all existing job postings and member profiles from day one.

A separate batch pipeline relying on Spark and Ray handled this one-time bootstrapping, computing embeddings in bulk across the entire corpus and backfilling the key-value stores.

The Results

JUDE's embeddings replaced the overlapping handcrafted features that had powered LinkedIn's job recommendations for years.

The results from production A/B tests were clear.

Qualified applications increased by 2.07%. The dismiss-to-apply ratio, which measures how often members dismiss a recommendation versus apply, dropped by 5.13%.

Total job applications rose by 1.91%. The team described it as the largest metric improvement from a single model change they had observed during that period.

These numbers might look modest in isolation. But they are not.

At LinkedIn's scale, with 260 million people searching for jobs every month, a 2% increase in qualified applications means millions of additional strong matches between job seekers and employers every year.

A 5% reduction in the dismiss ratio means members are seeing fewer irrelevant jobs cluttering their feed.

The system also simplified LinkedIn's infrastructure.

Instead of maintaining separate feature extraction models, taxonomies, and rigid pipelines, one LLM handles the heavy lifting.

When new roles emerge, roles that no taxonomy would have predicted, the model understands them from the text alone.

What Comes Next

The team is not done. JUDE currently works with text, such as job descriptions, profile summaries, and resume content. But a member's behaviour on LinkedIn carries rich signals that text alone cannot capture.

Which jobs did they click on? Which ones did they save? How long did they spend reading a posting before moving on?

LinkedIn plans to integrate these behavioural signals into JUDE so it can process both text and activity data. The embeddings would then reflect not just what you write, but also what you actually do.